Achieving FP32 Accuracy for INT8 Inference Using Quantization Aware Training with NVIDIA TensorRT | NVIDIA Technical Blog

Markus Nagel on Twitter: "Checkout our latest work with @mfournarakis on quantized training which brings us one step closer to achieve efficient on-device training."

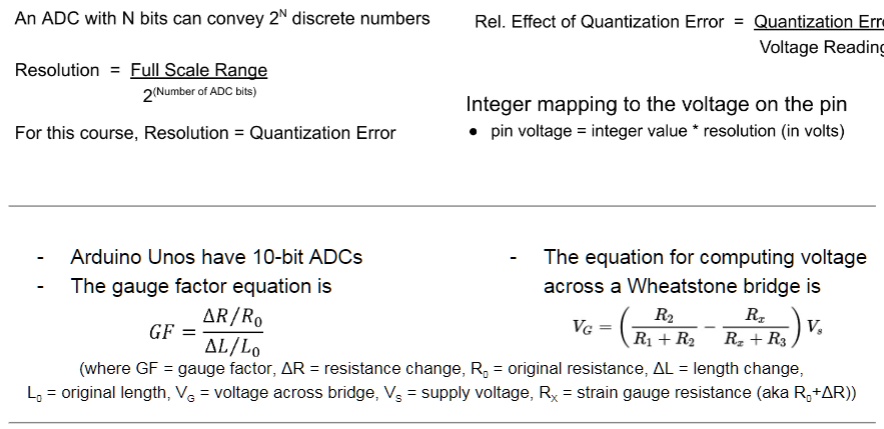

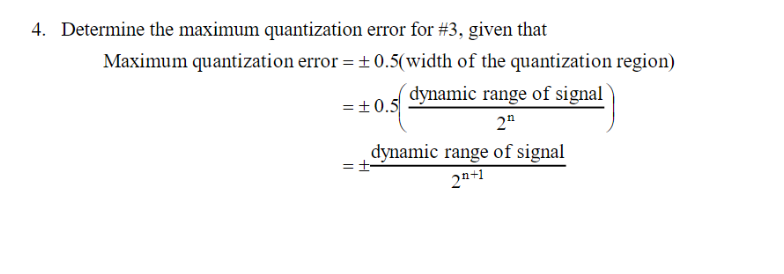

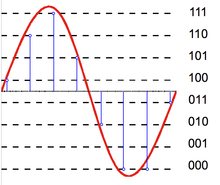

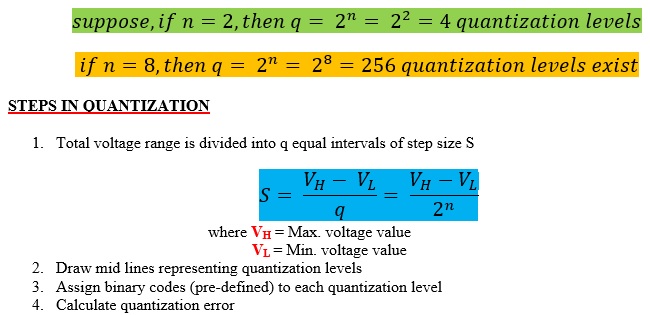

SOLVED: Compute the relative effect of quantization error in the conversion for a 0.180V analog signal with an 8-bit ADC. The ADC has a full scale range (FSR) of 0V to 5V.
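The arithmetic behind this problem can be sketched as follows. This is a minimal sketch under two stated assumptions that the problem title does not confirm: the ADC step size is taken as FSR / 2^N (some texts use FSR / (2^N - 1)), and the "relative effect" is read as the worst-case quantization error of half a step divided by the signal value. The function name `adc_quantization` is hypothetical.

```python
def adc_quantization(v_in, n_bits, v_min, v_max):
    """Return (step size, worst-case error, relative error) for an ideal ADC.

    Assumes step = FSR / 2**n_bits and a worst-case error of half a step;
    both are conventions chosen for this sketch, not taken from the source.
    """
    levels = 2 ** n_bits
    lsb = (v_max - v_min) / levels      # volts per code
    max_error = lsb / 2                 # worst-case quantization error, in volts
    relative_error = max_error / abs(v_in)
    return lsb, max_error, relative_error


lsb, max_err, rel = adc_quantization(v_in=0.180, n_bits=8, v_min=0.0, v_max=5.0)
print(f"LSB = {lsb * 1e3:.2f} mV")              # LSB = 19.53 mV
print(f"max error = ±{max_err * 1e3:.2f} mV")   # max error = ±9.77 mV
print(f"relative error = ±{rel * 100:.1f}%")    # relative error = ±5.4%
```

Under these assumptions, a 0.180 V signal sits so low in the 0 V to 5 V range that half a step is roughly 5% of its value, which is why small signals are usually amplified before conversion.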

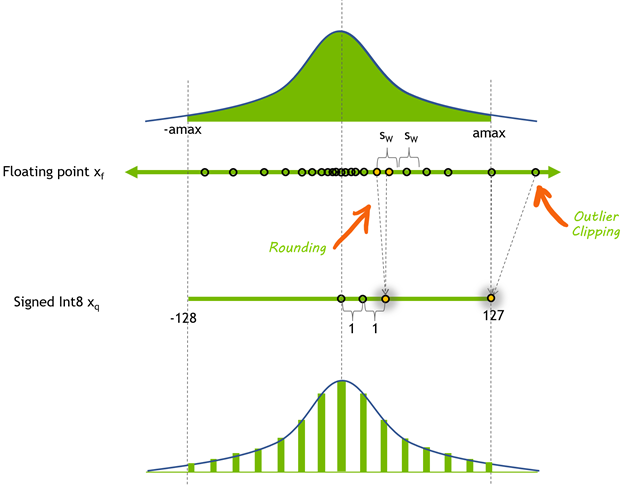

![\[R\] SmoothQuant: Accurate and Efficient Post-Training Quantization for Large Language Models - Massachusetts Institute of Technology and NVIDIA Guangxuan Xiao et al - Enables INT8 for LLM bigger than 100B parameters including](https://preview.redd.it/r-smoothquant-accurate-and-efficient-post-training-v0-etmfxsu0id1a1.jpg?width=608&format=pjpg&auto=webp&s=130675efbf095112acc39c9c336ea3881936bc2b)

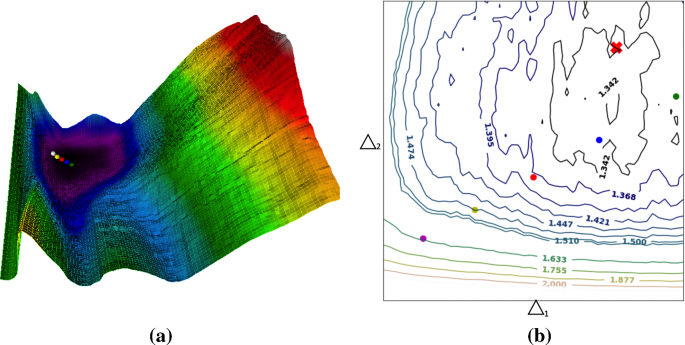

![\[PDF\] In-Hindsight Quantization Range Estimation for Quantized Training | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/ca97230115c6f1cedba4c8699962f2b2c2e8e9bb/4-Figure2-1.png)

![\[PDF\] In-Hindsight Quantization Range Estimation for Quantized Training | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/ca97230115c6f1cedba4c8699962f2b2c2e8e9bb/3-Figure1-1.png)